Simulation Puts You Anywhere You Want to Be

Michael Sorrenti

I help companies design products people can’t stop using | Creative Technologist | Product design & AI Advisory | Builder for Disney, ESPN, Mattel, Marvel & Nickelodeon | Founder, Game Pill

Teleportation as we imagine it does not exist, so you cannot step into a glowing tube and reappear on a spaceship or inside your office across town. That kind of magic is still locked inside movies and comic books.

But here is the part that nobody tells you. Something that looks a lot like teleportation is already here, and it is working right now in hospitals, oil rigs, car factories, and disaster zones. We are not moving people through the ether, but we are moving something even more valuable across thousands of miles in less time than it takes to blink.

What is actually happening is this: we are building live digital versions of real places and systems, feeding them real-time data from sensors, and using them to see, test, and interact with those places without physically being there.

In other words, instead of moving people, we are replicating environments in software and letting humans operate through those replicas as if they were on site.

Think about what that actually means. A surgeon in one city can operate on a patient in a different city.

An engineer sitting in a Houston office can inspect an oil platform in the middle of the ocean.

A car company can test a new factory layout without building a single wall or moving a single machine.

None of these things involve disappearing matter or science fiction, but all of them achieve the same practical result as teleportation. Distance stops being a problem, and the physical location of your body no longer determines what you can see, touch, or fix.

The same engines that power first-person shooters and fantasy role-playing games are now running the digital twins of oil refineries and assembly lines. Engineers realized that game engines already handle moving objects, realistic physics, and many people sharing the same virtual space, which are exactly the features you need to build a living digital copy of the real world.

Platforms like NVIDIA Omniverse now take live data from sensors, combine it with artificial intelligence, and add physics simulation. The result is a digital version of a real place that updates itself constantly. When something changes in the real world, the simulation changes too. When you run a test in the simulation, you can see what would likely happen in reality before you spend any money or take any risks. This is not just software but a new way of working, and it is quietly making distance irrelevant.
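To make that loop concrete, here is a minimal Python sketch, assuming a single pump with a flow sensor and a temperature sensor. The class `PumpTwin`, its fields, and the linear heating model are hypothetical illustrations, not part of Omniverse or any other real platform. The twin mirrors each incoming reading, and a what-if test runs on a copy so the mirrored state is never disturbed.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class PumpTwin:
    """Hypothetical digital twin of one pump (illustrative names and physics)."""
    flow_rate: float = 0.0      # cubic metres per hour, mirrored from a flow sensor
    temperature: float = 20.0   # degrees Celsius, mirrored from a temperature sensor
    history: list = field(default_factory=list)

    def ingest(self, reading: dict) -> None:
        """Update the twin so it matches the latest sensor reading."""
        self.flow_rate = reading.get("flow_rate", self.flow_rate)
        self.temperature = reading.get("temperature", self.temperature)
        self.history.append(dict(reading))

    def what_if_flow_increase(self, extra_flow: float,
                              heat_per_unit: float = 0.05,
                              max_temp: float = 80.0) -> tuple[float, bool]:
        """Test a change on a copy of the twin, never on the live state.

        Assumes a toy linear model: each extra unit of flow adds
        `heat_per_unit` degrees. Returns (predicted temperature, within limit?).
        """
        trial = copy.deepcopy(self)
        trial.flow_rate += extra_flow
        trial.temperature += extra_flow * heat_per_unit
        return trial.temperature, trial.temperature <= max_temp


# Usage: stream two readings in, then ask a what-if question.
twin = PumpTwin()
twin.ingest({"flow_rate": 100.0, "temperature": 58.0})
twin.ingest({"flow_rate": 100.0, "temperature": 60.0})
predicted, safe = twin.what_if_flow_increase(extra_flow=40.0)
print(f"Predicted temperature: {predicted:.1f} C, within limit: {safe}")
```

Running the experiment on a deep copy is the design choice that matters here: the live twin keeps tracking the real pump while any number of trial scenarios are tested beside it.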

How Video Game Engines Built the Future of Industry

More than 300 companies around the world are now using NVIDIA Omniverse to build digital twins of their operations, including giants like Siemens, BMW, BP, and Chevron. These are not small startups playing with toys but massive industrial companies that move real products and manage real risks. They have all decided that the best way to work on the physical world is to first build a copy of it inside a computer.

BMW Group was one of the early believers, and the results have been stunning. The company built a complete digital twin of its production system covering more than 30 factories around the world. Production planners used to spend several weeks walking real vehicles through real production lines to check for problems, a process that required weekend work and sometimes even draining giant tanks of primer just to test one vehicle. Today the same check takes three days in simulation, a reduction of roughly 90 percent in testing time. The simulation is also more thorough, because it can check angles that a human would miss.

BP took the same approach with its offshore oil platforms in the Gulf of America. The company built a digital twin of its Argos platform, so engineers in Houston can now inspect equipment as if they were standing on the platform itself. In one project, BP needed to inspect 300 valves on the platform, and using the digital twin cut the inspection time by 50 percent. No helicopters, no rough seas, and no overnight stays on a platform. Just an engineer sitting at a desk, moving through a digital copy of the real world, doing work that used to require a dangerous and expensive trip.

Virtual Surgery and Digital Twins: How Simulation Is Transforming Medicine

Imagine you need a rare and difficult surgery, but the best doctor for that specific problem lives two thousand miles away. In the old world, you would have to travel to that doctor or hope that someone local had the same skills. In the new world, the doctor comes to you through a screen and a set of robotic tools, and that is not a promise for the future but something happening right now. Surgeons are performing operations across state lines and even across countries using robotic systems that translate their hand movements into precise actions on a patient far away.

Training has changed just as dramatically. A young doctor can practice a rare procedure that happens only once a year in real life. In a simulation, that same procedure can happen every single morning before breakfast, and the doctor's mistakes hurt no one. By the time a real patient needs that procedure, the doctor may have already done it hundreds of times, and distance to the best training no longer matters because the simulation comes to the doctor.

Hospitals are also using digital twins to plan complex surgeries as a team. The team builds a digital copy of the patient’s own body using CT scans and MRIs, so they can see the exact shape of the patient’s organs and try different approaches before making a single cut. By the time the team enters the real operating room, they have already performed the operation many times in the digital world, which can make the real surgery faster and shorten the patient's time under anesthesia.

AI and Digital Twins: The New Energy Revolution Behind BP and Chevron

The BP story shows why this matters offshore. The Argos platform is a massive floating facility in the Gulf of America, and getting there requires a helicopter ride or a long boat trip that weather can cancel for days.

But the digital twin of Argos lives on servers in Houston, so any engineer with permission can visit it instantly, walk through the digital platform, check on equipment, and run simulations of future problems.

The real platform keeps pumping oil while the digital platform runs experiments.

BP has also added artificial intelligence to the mix, and the AI watches the digital twin to learn how to predict failures before they happen. It notices small changes in temperature or vibration that a human might miss, and when it spots a potential problem, it alerts the engineers so they can fix it before anything breaks. This is like having a mechanic who can see the future, and it is making offshore oil production safer and more reliable.
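BP has not published how its model works, so the sketch below only illustrates the general idea of catching small drifts early, and it swaps in a simple rolling z-score instead of a trained AI model. It assumes vibration readings arrive one at a time; `VibrationMonitor`, the 20-reading window, and the threshold of 3 standard deviations are hypothetical choices.

```python
from collections import deque
from statistics import mean, pstdev


class VibrationMonitor:
    """Hypothetical early-warning check using a rolling z-score.

    A simple statistical stand-in for a trained AI model: it flags a reading
    that sits far outside the baseline formed by the most recent readings.
    """

    def __init__(self, window: int = 20, threshold: float = 3.0, min_baseline: int = 5):
        self.readings = deque(maxlen=window)   # sliding window of the latest readings
        self.threshold = threshold             # standard deviations that count as unusual
        self.min_baseline = min_baseline       # readings needed before judging anything

    def check(self, value: float) -> bool:
        """Return True if `value` looks anomalous compared with the recent baseline."""
        alert = False
        if len(self.readings) >= self.min_baseline:
            mu = mean(self.readings)
            sigma = max(pstdev(self.readings), 1e-9)  # avoid dividing by zero on a flat signal
            alert = abs(value - mu) / sigma > self.threshold
        self.readings.append(value)
        return alert


# Usage: ten ordinary vibration readings, then a sudden spike.
monitor = VibrationMonitor()
normal = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.0, 1.02, 0.98, 1.0]
flags = [monitor.check(v) for v in normal]
spike = monitor.check(2.5)
print(flags, spike)
```

A real system would watch many signals at once, such as temperature and vibration together, and learn what "normal" looks like for each machine, but the principle of alerting on a departure from the recent baseline is the same.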

Chevron has adopted a similar approach with its Digital Oil Field program, and much of the energy industry is moving in the same direction.

How Drone Simulation Is Redefining Remote Work and Rescue

Drones are the closest thing we have to a remote viewing machine, and the technology keeps getting better every year.

A drone flying over a disaster zone sends back video, but modern systems do something much smarter by building a three-dimensional model of the entire area in real time. The operator can examine that model from any angle, zoom in on specific details, measure distances, and mark locations for rescue crews.

This is not just watching a video but having your eyes and your sense of space teleported into the drone.

Companies like DJI have made this technology affordable and portable, while Lockheed Martin builds advanced systems for military and industrial use. A search and rescue team can cover miles of rough terrain without walking it, and a construction inspector can examine a bridge from every angle without climbing it. The drone becomes your remote body, and distance shrinks to almost nothing.

The training side is just as impressive, because drone pilots learn to fly in simulated environments that look and feel like real cities or forests. They can practice dangerous maneuvers without risking expensive equipment, and they can experience mechanical failures and emergency situations that would be too dangerous to create in real life.

By the time they fly a real mission, they have already handled multiple problems in simulation. Because the training can happen remotely, the skills it builds stay with the pilot whatever the mission.

The Rise of Simulation‑Driven Production

BMW’s virtual factory is one of the best examples of how simulation is collapsing distance in the real world. The company has digital twins of more than 30 production sites, and every new vehicle model goes through the virtual factory before anyone touches a real assembly line. BMW projects that this approach will reduce production planning costs by up to 30 percent. Between now and 2027, the company will integrate more than 40 new or updated vehicles into its global production system, and every single one of them will be tested in simulation first.

The collision check process is the most dramatic example of time savings. For every new car launch, BMW must verify that the vehicle fits on the production line and does not hit anything as it moves through the factory. In the past, a real vehicle body had to be manually guided through the production lines over several weekends, and the paint shop sometimes required completely emptying and cleaning giant dip coating tanks. That process cost enormous amounts of time and money, but today the same check happens in three days of simulation.

What used to take almost four weeks now takes three days, and the results are more accurate.
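At its simplest, a virtual collision check sweeps a model of the car body along the conveyor path and tests it against every fixed obstacle at each step. The Python sketch below assumes axis-aligned bounding boxes and a hand-written path; the `Box` type, the `collision_check` function, and all dimensions are hypothetical, and a production tool would typically test detailed 3D geometry rather than boxes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in metres, given by its min and max corners (x, y, z)."""
    lo: tuple[float, float, float]
    hi: tuple[float, float, float]

    def moved(self, offset: tuple[float, float, float]) -> "Box":
        """Return a copy of this box shifted by `offset`."""
        return Box(tuple(a + d for a, d in zip(self.lo, offset)),
                   tuple(a + d for a, d in zip(self.hi, offset)))

    def intersects(self, other: "Box") -> bool:
        """Two boxes overlap only if their ranges overlap on every axis."""
        return all(a_lo < b_hi and b_lo < a_hi
                   for a_lo, a_hi, b_lo, b_hi in zip(self.lo, self.hi, other.lo, other.hi))


def collision_check(body: Box, path: list[tuple[float, float, float]],
                    obstacles: dict[str, Box]) -> list[tuple[int, str]]:
    """Sweep the car body along the conveyor path and report (step, obstacle) clashes."""
    clashes = []
    for step, offset in enumerate(path):
        placed = body.moved(offset)
        for name, obstacle in obstacles.items():
            if placed.intersects(obstacle):
                clashes.append((step, name))
    return clashes


# Hypothetical example: a 4.5 m x 1.8 m x 1.4 m body carried past a low beam.
body = Box((0.0, 0.0, 0.0), (4.5, 1.8, 1.4))
beam = {"paint_shop_beam": Box((10.0, -1.0, 1.8), (11.0, 3.0, 2.5))}
high_path = [(float(x), 0.0, 0.5) for x in range(0, 20, 2)]  # carrier lifts body 0.5 m
low_path = [(float(x), 0.0, 0.3) for x in range(0, 20, 2)]   # carrier lifts body 0.3 m

print(collision_check(body, high_path, beam))
print(collision_check(body, low_path, beam))
```

In this toy example, lowering the carrier by 0.2 m clears the beam, which is the kind of fix a planner can try in minutes in simulation instead of over a weekend on the real line.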

BMW has also opened the world’s first AI-controlled car factory in Debrecen, Hungary, where the entire facility was designed and tested as a complete digital twin before construction even began. Once fully operational, more than 1,000 robots and human workers will be guided by AI systems, and the factory will emit 90 percent less carbon dioxide than traditional plants. As BMW’s head of production put it, the factory was designed and built entirely around a digital first strategy that offers a new dimension in efficient production.

Boston Dynamics makes some of the most famous robots in the world. The Spot robot, a four-legged machine about the size of a large dog, recently learned to perform continuous backflips using reinforcement learning. That sounds like a party trick, but the training behind that backflip has made Spot much better at surviving in dangerous real-world environments.

Here is how it worked. The research team trained Spot in simulation, running millions of training cycles inside a computer before ever trying the moves on a real robot. Their first attempts to transfer the simulated results to the real robot failed almost every time, and engineer Arun Kumar said the robot fell hundreds of times on gymnastics mats before the team dared to let Spot try the moves on concrete. That careful process paid off in a big way: the reinforcement learning training made Spot's posture adjustments 40 percent faster when the robot falls or slips, which helps protect the tens of thousands of dollars' worth of sensors mounted on its back.

The simulation training also produced some unexpected benefits. Spot’s walking gait became more natural and more similar to a real dog, joint swing was reduced by 15 percent, and limb coordination on uneven terrain improved by 22 percent. This breakthrough has real commercial value, because Boston Dynamics applied the new algorithm to high-risk scenarios like oil pipeline inspections.

One energy client reported a 67 percent reduction in Spot’s accident rate when walking through slippery pipelines, and as one engineer put it, the goal is not backflips but teaching the robot to predict falls so it can protect itself better than humans ever could.

By the time a Boston Dynamics robot enters a real job site, it has already practiced in a simulated version of that environment millions of times.

How Gaming Tech Is Making Distance Disappear

The technology that made all of this possible was not built for industry but for play. Video game engines were designed to make entertainment more fun, and nobody at those companies sat around thinking about how to improve oil rig inspections or car factory planning. But that is exactly what happened.

The same tools that let you explore a fantasy world or race a car through a city are now letting engineers explore digital twins of real factories and doctors practice surgeries on virtual patients.

Play led to work, entertainment led to infrastructure, and fun led to something genuinely useful. More than 300 companies are already using this technology, and that number is likely to grow into the thousands.

The results are already impressive, with roughly 90 percent reductions in testing time, 50 percent cuts in inspection time, and 67 percent drops in accident rates.

Distance is collapsing in a practical and everyday way, and the industrialization of presence is already here. The best part is that the same tools that brought you your favorite video game are now bringing the world closer together and solving real problems.

Helping Companies Collapse Risk Through Simulation

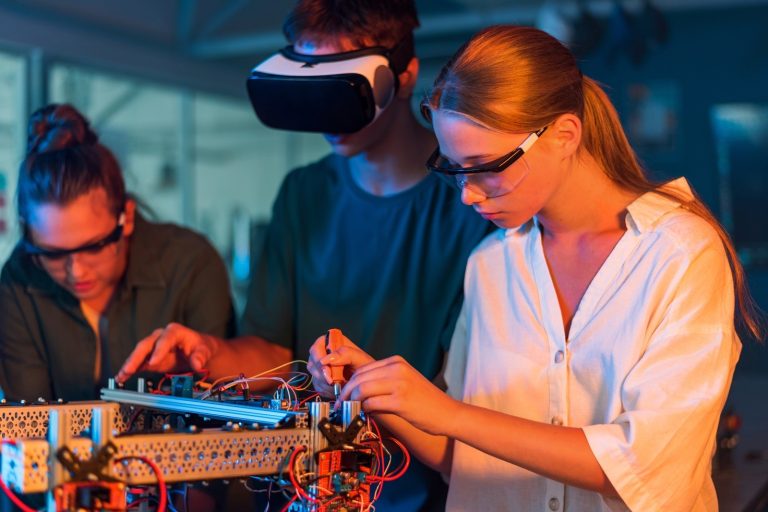

Michael Sorrenti and his team at GAME PILL apply the same simulation principles reshaping healthcare, energy, and manufacturing to one of the most overlooked problems in business: preventable failure. By turning safety training into immersive, real-time simulation environments, they allow workers to operate inside high-risk scenarios such as equipment failure, emergency response, and system breakdowns without physical consequences.

With more than 26 years of experience building interactive systems for brands like Disney, Marvel, and Nickelodeon, the team brings the engagement architecture of games into industrial environments. The result is not just better training, but a shift in capability.

Experience is no longer limited to what happens on the job. It can be simulated, repeated, and mastered before reality demands it.


#Simulation #DigitalTwin #AI #VirtualReality #AugmentedReality #IndustrialInnovation #FutureOfWork #Automation #SmartManufacturing #EnergyTech #HealthcareInnovation #Robotics #DroneTechnology #GameDevelopment #UnrealEngine #Unity3D #NVIDIA #Omniverse #PredictiveMaintenance #EnterpriseAI #TrainingAndDevelopment #SafetyTraining #RiskManagement #InnovationStrategy #TechTrends